As of vCloud Director 8.10, vCloud Director Network Isolation (VCDNI) has been deprecated, and VCDNI-backed networks should be migrated to VXLAN before upgrading to vCenter 6.5 and vSphere 6.5. Tom Fojta has a nice blog post about the migration to VXLAN, so I recommend taking a look at it if you are about to do it.

The migration itself wasn’t painful, and everything went smoothly. We migrated our VCDNI-backed networks to VXLAN during a maintenance window and had zero issues with the migration process itself. After the migration, however, we found an issue: virtual machines’ Ethernet adapters were disconnected whenever the VMs migrated from one host to another. After a lot of debugging we found the root cause, and together with VMware support we found a fix that is non-disruptive to production and customer network traffic.

The key to the whole issue was that whenever a host is rebooted after VCDNI has been removed from the vCloud Director configuration, its host key is set to 0x0. You can check this by connecting to the host over SSH and running the following command:

    [root@esx-host:~] esxcli vcloud fence getfenceinfo
    Module Parameters:
    Host Key: 0x0
    Configured LAN MTUs:
    +------------------------------------------------------------------------------------------+
    | LAN ID | - - - - - - - - - - - - - - - - |
    | MTU | - - - - - - - - - - - - - - - - |
    +------------------------------------------------------------------------------------------+

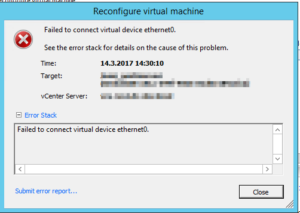
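If you have many hosts to go through, it can help to pull out just the host key value. The following is a minimal POSIX sh sketch, assuming the `Host Key: <hex value>` line format shown in the command output above; the function name `host_key_from_fenceinfo` is hypothetical and not part of ESXi.

```shell
# host_key_from_fenceinfo (hypothetical helper): read the output of
# `esxcli vcloud fence getfenceinfo` on stdin and print the host key value,
# e.g. "0x0". Assumes a "Host Key: 0x..." field as in the output above.
host_key_from_fenceinfo() {
    sed -n 's/.*Host Key: *\(0x[0-9A-Fa-f]*\).*/\1/p' | head -n 1
}

# Example usage on an ESXi host:
#   key=$(esxcli vcloud fence getfenceinfo | host_key_from_fenceinfo)
#   [ "$key" = "0x0" ] && echo "$(hostname): host key is 0x0"
```

A host reporting 0x0 is one where VMs arriving via vMotion can end up with disconnected adapters, as described below.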

During vMotion from a host with the host key present to one with the host key set to 0x0, the virtual machine would come up on the destination host with ethernet0 disconnected. When we tried to connect the network adapter manually using the vSphere Client, we received the error “Failed to connect virtual device ethernet0”. When we tried to enable the adapter, /var/log/vmkernel.log showed the following errors:

    2017-03-14T17:55:14.540Z cpu3:82924)DVFilter: 5472: worldID=82929, vNicIndex=0, filterIndex=1
    2017-03-14T17:55:14.540Z cpu3:82924)DVFilter: 5474: name="vsla-fence", onFailure=0, numParams=16
    2017-03-14T17:55:14.541Z cpu3:82924)Net: 2441: connected VMNAME (xxxxxxxx-0b31-4e8a-9746-9e0ba9aafa5b).eth0 eth0 to vDS, portID 0x200004d
    2017-03-14T17:55:14.541Z cpu3:82924)Net: 3132: associated dvPort 1022 with portID 0x200004d
    2017-03-14T17:55:14.541Z cpu3:82924)vsla-fence: Fence_CreateFilter:455: CreateFilter handle:0x4304aceec450
    2017-03-14T17:55:14.541Z cpu3:82924)vsla-fence: Fence_CreateFilter:462: Fence create failed. Host key is not configured.
    2017-03-14T17:55:14.541Z cpu3:82924)DVFilter: 3776: The fast path agent failed to attach the filter 'vsla-fence'
    2017-03-14T17:55:14.541Z cpu3:82924)DVFilter: 5410: Failed to add filter vsla-fence on vNic 0 slot 1: Bad parameter
    2017-03-14T17:55:14.541Z cpu3:82924)WARNING: Net: 3178: DVFilterActivateCommon failed for port 0x200004d: Failure
    2017-03-14T17:55:14.541Z cpu3:82924)Net: 3640: dissociate dvPort 1022 from port 0x200004d
    2017-03-14T17:55:14.541Z cpu3:82924)Net: 3646: disconnected client from port 0x200004d
    2017-03-14T17:55:17.301Z cpu37:33491)WARNING: DVFilter: 1192: Couldn't enable keepalive: Not supported
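To see which VMs were hit, the failures in the log excerpt above can be correlated back to VM names: each port ID in a `DVFilterActivateCommon failed for port ...` line also appears at the end of an earlier `connected <VM> (...) ... portID ...` line. The following is a rough sketch, assuming the log lines look exactly like the excerpt above; `fenced_vms` is a hypothetical helper name. Port IDs can be reused over time, so treat the output as a list of candidates rather than a definitive answer.

```shell
# fenced_vms (hypothetical helper): print names of VMs whose vNIC failed to
# attach the vsla-fence DVFilter, based on vmkernel.log lines like the excerpt
# above. Defaults to the live log; pass another file (e.g. a copy) as $1.
fenced_vms() {
    log=${1:-/var/log/vmkernel.log}
    # Collect the distinct port IDs whose DVFilter activation failed.
    ports=$(sed -n 's/.*DVFilterActivateCommon failed for port \(0x[0-9a-fA-F]*\).*/\1/p' "$log" | sort -u)
    for port in $ports; do
        # The "Net: NNNN: connected <VM> (<uuid>).ethN ... portID <port>"
        # line ends with the port ID and names the VM.
        grep "portID $port\$" "$log" |
            sed -n 's/.*Net: [0-9]*: connected \(.*\) (.*/\1/p'
    done | sort -u
}
```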

In other words, the VM was still requesting the vsla-fence filter on its dvSwitch port, which is no longer supported after the migration to VXLAN. If you check the virtual machine’s .vmx configuration file, you will find the following entries:

    ethernet0.filter1.name = "vsla-fence"
    ethernet0.filter1.param0 = "0x0100000c"
    ethernet0.filter1.param1 = "1"
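To list the VMs on a host that still carry these entries, you could search the .vmx files on its datastores. A minimal sketch, assuming VMs live under `/vmfs/volumes/` and that any .vmx file mentioning `vsla-fence` is affected; `find_fenced_vmx` is a hypothetical helper name.

```shell
# find_fenced_vmx (hypothetical helper): list .vmx files that still reference
# the vsla-fence DVFilter. With no arguments it searches every mounted
# datastore under /vmfs/volumes/; otherwise it searches the given paths.
find_fenced_vmx() {
    [ "$#" -gt 0 ] || set -- /vmfs/volumes/
    # -name '*.vmx' skips .vmxf and .vmx~ files; grep -l prints matching paths.
    find "$@" -type f -name '*.vmx' -exec grep -l 'vsla-fence' {} \;
}
```

Scanning every datastore can take a while in a large environment; passing a single datastore path narrows the search. This only identifies affected VMs; the entries themselves are removed through vCloud Director as described next.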

To fix the issue, go to any virtual machine experiencing it, open its properties and edit any setting (like the name or description), or simply tick the “Show ethernet adapter type” checkbox under Hardware. This makes vCloud Director rewrite the .vmx file and remove the entries listed above that reference the no-longer-supported vsla-fence filter. Once the entries are removed, migration works without a hitch.

Edit 20.3.2017: NSX Edge firewalls still seem to have this problem, and it can’t be fixed the same way. Redeploying the Edge doesn’t remove the fencing parameters either.

Viewing the Edge interface properties in the vSphere Web Client shows these settings:

    ethernet2.filter1.name=vsla-fence
    ethernet2.filter1.param1=1
    ethernet2.filter1.param0=0x0100013c

However, they can’t be removed using NSX Manager. I will update this post when I get more information.

Edit 11.12.2017

This case was solved with the help of VMware technical support shortly after this post was written. The solution was to use the NSX API to update the NSX Edge firewall configuration and remove the filter values from it. Once the new configuration had been pushed to the NSX Edge, the firewall could be redeployed to apply the changes. If you need to do this for many Edges at once, contact VMware technical support; they should be able to provide the same script they custom-built for us.

Hi Antti,

Thanks for this post. I plan to migrate a production environment from VCDNI to VXLAN soon, so I guess it will be useful.

Did you manage to find a fix for the NSX Edge firewalls?

Keep up the good work

Hi,

Yes, we found a fix for this; I just forgot to update the post. We were in contact with VMware technical support, and they provided us with a script that made the required API calls to clear the settings. I would suggest contacting VMware about this, as they will surely be able to help you.